Modern data centers no longer handle only traditional Ethernet traffic. Today’s enterprise infrastructure must simultaneously support cloud applications, virtualization platforms, AI workloads, and high-speed storage networks while maintaining low latency, scalability, and operational efficiency. As storage performance requirements increased, organizations began searching for ways to simplify the complexity of running separate LAN and SAN infrastructures.

This is where FCoE (Fibre Channel over Ethernet) became an important networking technology.

FCoE is a converged networking protocol that encapsulates native Fibre Channel frames inside Ethernet frames, allowing storage traffic and standard network traffic to share the same physical Ethernet infrastructure. Instead of maintaining independent Fibre Channel switches, adapters, and cabling alongside Ethernet networks, data centers can transport both types of traffic through a unified high-speed Ethernet environment.

In simple terms, FCoE combines the reliability and storage capabilities of traditional Fibre Channel SANs with the flexibility and scalability of Ethernet networking.

The technology was originally developed to reduce:

Data center cabling complexity

Hardware and infrastructure costs

Power and cooling requirements

Network management overhead

At the same time, FCoE preserves many of the characteristics that enterprise storage environments require, including deterministic performance, low latency, and lossless transport behavior.

Unlike protocols such as iSCSI or NVMe/TCP, FCoE does not convert storage traffic into TCP/IP packets. Instead, it keeps the Fibre Channel protocol intact while transporting it over Ethernet through specialized mechanisms such as Data Center Bridging (DCB) and Priority Flow Control (PFC).

As a result, FCoE became widely adopted in converged data center architectures from vendors such as Cisco, Dell EMC, Brocade, NetApp, and VMware, especially during the transition from traditional Fibre Channel infrastructures toward Ethernet-based storage networking.

Another important aspect of FCoE is its close relationship with modern Ethernet optical connectivity. Because FCoE operates over Ethernet physical layers, it typically uses standard Ethernet optical transceivers such as:

10G SFP+ SR/LR modules

25G SFP28 modules

40G QSFP+ modules

100G QSFP28 modules

DAC and AOC high-speed cabling solutions

This creates a strong connection between FCoE deployment strategies and data center optical module selection, compatibility, and interoperability.

In this guide, you will learn:

What FCoE (Fibre Channel over Ethernet) is

How FCoE works inside modern data centers

The relationship between FCoE and optical transceivers

How FCoE compares with Fibre Channel, Ethernet, and iSCSI

Which optical modules are commonly used in FCoE networks

The advantages, limitations, and real-world use cases of FCoE

Whether you are a network engineer, system integrator, storage architect, or optical transceiver buyer, understanding FCoE remains valuable for designing efficient, high-performance converged infrastructures in modern enterprise environments.

✅ What Is FCoE (Fibre Channel over Ethernet)?

FCoE (Fibre Channel over Ethernet) is a storage networking technology that enables native Fibre Channel traffic to be transmitted across high-speed Ethernet networks. Instead of using a completely separate Fibre Channel SAN infrastructure with dedicated switches, adapters, and cabling, FCoE encapsulates Fibre Channel frames inside Ethernet frames, allowing both storage traffic and standard LAN traffic to operate on the same converged network infrastructure. The goal of FCoE is not to replace Fibre Channel itself, but to simplify data center architecture by combining storage and Ethernet networking into a unified transport environment while preserving the low latency, reliability, and lossless characteristics required by enterprise storage systems.

Simple Definition of FCoE

| Term | Definition |
|---|---|
| FCoE | Fibre Channel over Ethernet |
| Main Purpose | Transport Fibre Channel storage traffic over Ethernet |
| Core Function | Encapsulates FC frames inside Ethernet frames |
| Primary Benefit | Converged LAN and SAN infrastructure |
| Typical Environment | Enterprise data centers and storage networks |
| Common Speeds | 10G, 25G, 40G, and 100G Ethernet |
| Key Requirement | Lossless Ethernet using Data Center Bridging (DCB) |

What FCoE Stands For

FCoE stands for Fibre Channel over Ethernet.

The name directly describes how the technology works:

Fibre Channel (FC) is the traditional high-performance storage networking protocol used in SAN (Storage Area Network) environments.

Over Ethernet means those Fibre Channel frames are transported through Ethernet infrastructure rather than through dedicated Fibre Channel physical networks.

Importantly, FCoE does not convert Fibre Channel into TCP/IP traffic. The original Fibre Channel protocol remains intact throughout transmission. FCoE simply replaces the native Fibre Channel physical and link layers (cabling, optics, and switching) with Ethernet-based transport.

Why FCoE Exists

Traditional enterprise data centers historically maintained two completely separate network infrastructures:

| Network Type | Purpose |
|---|---|
| Ethernet LAN | Standard data and application traffic |
| Fibre Channel SAN | Storage traffic |

This architecture increased:

Cabling complexity

Switch count

Adapter requirements

Power consumption

Cooling demands

Infrastructure cost

FCoE was introduced to solve this problem through network convergence.

Instead of deploying separate Ethernet NICs and Fibre Channel HBAs inside each server, organizations could use a single converged network infrastructure capable of carrying both traffic types simultaneously.

This approach simplified large-scale data center deployment while reducing operational overhead and improving infrastructure efficiency.

The Simplest Way to Understand FCoE

The easiest way to understand FCoE is:

FCoE allows Fibre Channel storage traffic to travel through Ethernet networks.

Or even more simply:

FCoE = Fibre Channel traffic packaged inside Ethernet frames

A traditional Fibre Channel network looks like this:

Server → FC Switch → Storage Array

An FCoE network looks like this:

Server → Ethernet Switch → Storage Array

In an FCoE deployment, the Ethernet network becomes the shared transport platform for both:

Normal IP network traffic

Enterprise storage traffic

Because storage workloads are highly sensitive to packet loss and latency, FCoE environments typically require specialized Ethernet features such as:

Data Center Bridging (DCB)

Priority Flow Control (PFC)

Enhanced Transmission Selection (ETS)

These technologies help create a lossless Ethernet environment capable of supporting enterprise-class storage communication.

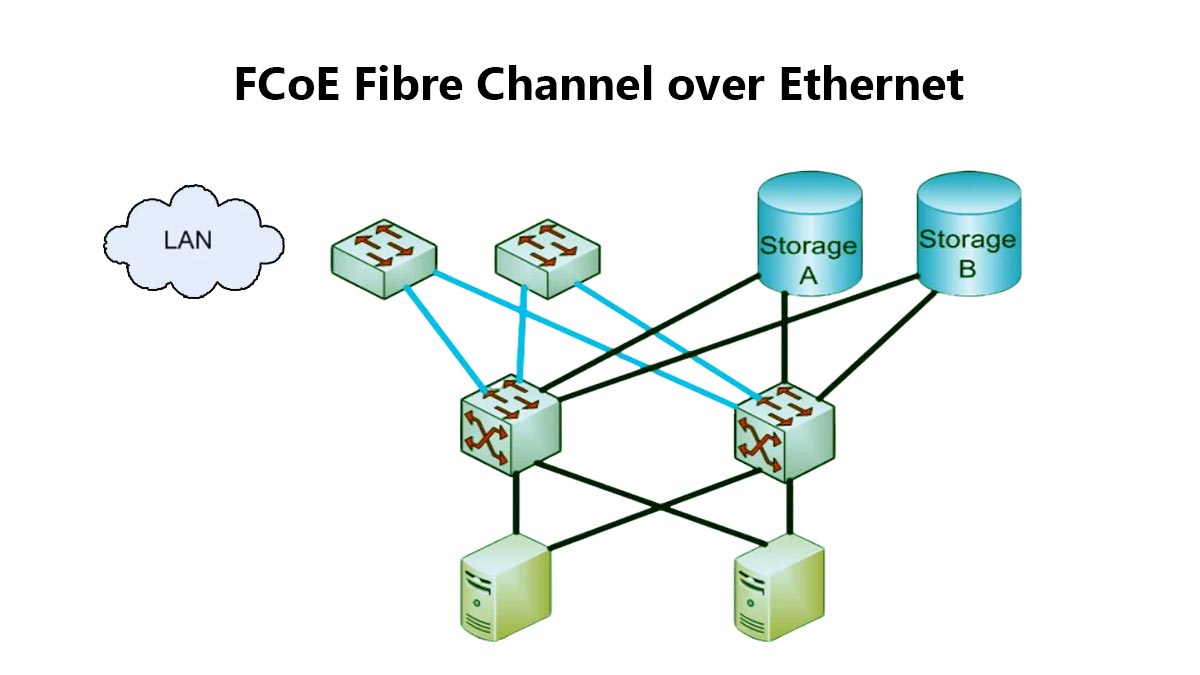

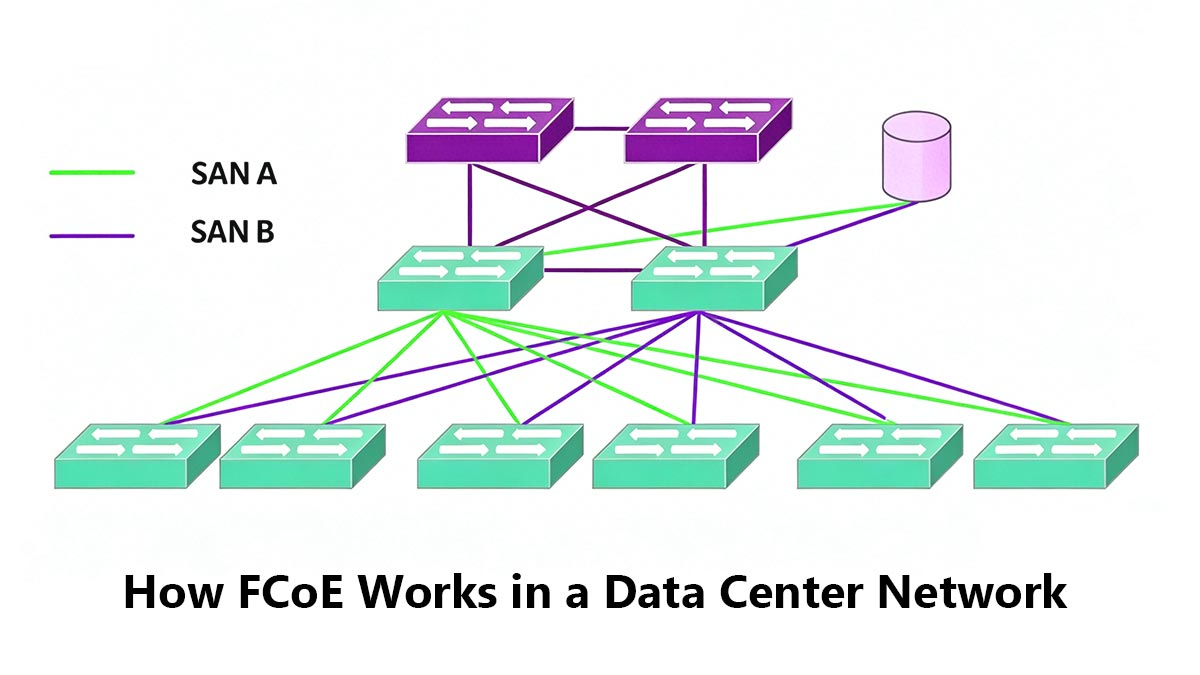

✅ How FCoE Works in a Data Center Network

FCoE works by transporting native Fibre Channel storage traffic across Ethernet networks without converting it into TCP/IP. Instead of using separate Fibre Channel switches and cabling, FCoE encapsulates Fibre Channel frames inside Ethernet frames, allowing both LAN and SAN traffic to share the same high-speed Ethernet infrastructure.

This converged networking model reduces hardware complexity while maintaining the low latency and reliability required for enterprise storage environments.

FC Frames Encapsulated Into Ethernet

The core function of FCoE is Fibre Channel frame encapsulation.

The transmission process works as follows:

Fibre Channel Frame → FCoE Header → Ethernet Frame → Ethernet Network

Unlike iSCSI or NVMe/TCP, FCoE does not convert storage traffic into TCP/IP packets. The original Fibre Channel protocol remains intact during transport.

| Protocol | Transport Method |
|---|---|
| Fibre Channel | Native FC Fabric |
| FCoE | FC over Ethernet |
| iSCSI | SCSI over TCP/IP |
| NVMe/TCP | NVMe over TCP/IP |
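The encapsulation order described above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function names are hypothetical, the SOF/EOF code values and the default priority of 3 are assumptions that should be checked against the FC-BB-5 specification and your switch configuration, and the Ethernet FCS is omitted because the network adapter normally appends it.

```python
import struct

FCOE_ETHERTYPE = 0x8906  # EtherType assigned to FCoE
VLAN_TPID = 0x8100       # IEEE 802.1Q tag protocol identifier
SOF_I3 = 0x2E            # assumed start-of-frame code (SOFi3)
EOF_T = 0x42             # assumed end-of-frame code (EOFt)


def mac_to_bytes(mac: str) -> bytes:
    """Convert 'aa:bb:cc:dd:ee:ff' into 6 raw bytes."""
    raw = bytes.fromhex(mac.replace(":", ""))
    if len(raw) != 6:
        raise ValueError(f"not a 48-bit MAC address: {mac!r}")
    return raw


def encapsulate_fc_frame(fc_frame: bytes, dst_mac: str, src_mac: str,
                         vlan_id: int, priority: int = 3) -> bytes:
    """Wrap an FC frame (FC header + payload + FC CRC) in an FCoE/Ethernet frame.

    The FC frame is copied unchanged; only Ethernet and FCoE framing is added.
    """
    if not 0 <= vlan_id <= 4095:
        raise ValueError("vlan_id must fit in 12 bits")
    if not 0 <= priority <= 7:
        raise ValueError("priority must fit in 3 bits")

    # 802.1Q tag control field: 3-bit priority, 1-bit DEI (0), 12-bit VLAN ID.
    tci = (priority << 13) | vlan_id
    eth_header = (mac_to_bytes(dst_mac) + mac_to_bytes(src_mac)
                  + struct.pack("!HHH", VLAN_TPID, tci, FCOE_ETHERTYPE))

    # FCoE header: 4-bit version (0) plus reserved bits (13 bytes), then SOF.
    fcoe_header = bytes(13) + bytes([SOF_I3])
    # FCoE trailer: EOF followed by 3 reserved bytes.
    fcoe_trailer = bytes([EOF_T]) + bytes(3)

    return eth_header + fcoe_header + fc_frame + fcoe_trailer


if __name__ == "__main__":
    # Placeholder FC frame: 24-byte header, small payload, 4-byte CRC.
    demo_fc = bytes(24) + b"SCSI payload" + bytes(4)
    frame = encapsulate_fc_frame(demo_fc, "0e:fc:00:01:00:01",
                                 "0e:fc:00:01:00:02", vlan_id=100)
    print(len(frame), frame[:18].hex())
```

With a maximum-size FC frame (24-byte header, 2112-byte payload, 4-byte CRC) this layout comes to 2176 bytes, or 2180 bytes once the 4-byte Ethernet FCS is added. That is larger than a standard 1500-byte Ethernet payload, which is why FCoE links are normally configured to carry larger frames.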

CNA, Switch, and Storage Path

A typical FCoE deployment follows this path:

Server → CNA → Ethernet Switch → Storage

CNA (Converged Network Adapter)

The CNA combines:

Ethernet NIC functionality

Fibre Channel HBA functionality

This allows a single adapter to handle both network and storage traffic.

FCoE-Capable Switch

FCoE switches support technologies such as:

Data Center Bridging (DCB)

Priority Flow Control (PFC)

These switches may also function as an FCF (Fibre Channel Forwarder), which manages Fibre Channel services inside the Ethernet network.

Why DCB Is Required for Lossless Transport

Traditional Ethernet networks are lossy, meaning packets can be dropped during congestion. Fibre Channel storage traffic, however, requires highly reliable and predictable transport.

To support this, FCoE relies on Data Center Bridging (DCB), which enhances Ethernet with features designed for lossless transmission.

Key technologies include:

| DCB Feature | Purpose |
|---|---|
| PFC | Prevents frame loss |
| ETS | Allocates bandwidth |
| DCBX | Exchanges configuration settings |

These features help Ethernet behave more like a Fibre Channel storage network.
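To make the ETS row more concrete, here is a simplified sketch of how bandwidth could be divided between traffic classes. It assumes a work-conserving model in which each class is guaranteed its configured percentage and any bandwidth a class does not use is shared among the classes that still have traffic waiting. The function name, class names, and percentages are hypothetical, and real switch schedulers work per frame rather than on averages like this.

```python
def ets_allocate(link_gbps, shares, demand_gbps):
    """Divide link bandwidth between traffic classes, ETS-style.

    shares       class name -> guaranteed percentage (must total 100)
    demand_gbps  class name -> offered load in Gb/s
    Returns      class name -> allocated bandwidth in Gb/s
    """
    if sum(shares.values()) != 100:
        raise ValueError("ETS shares must add up to 100 percent")

    alloc = {name: 0.0 for name in shares}
    active = {name for name in shares if demand_gbps.get(name, 0) > 0}
    remaining = float(link_gbps)

    while active and remaining > 1e-9:
        total_share = sum(shares[name] for name in active)
        if total_share == 0:
            break  # only zero-share classes are left; nothing is guaranteed
        satisfied = set()
        spent = 0.0
        for name in active:
            # Offer each class its proportional slice of what is left.
            offer = remaining * shares[name] / total_share
            grant = min(offer, demand_gbps[name] - alloc[name])
            alloc[name] += grant
            spent += grant
            if alloc[name] >= demand_gbps[name] - 1e-9:
                satisfied.add(name)
        remaining -= spent
        if not satisfied:
            break  # every class is limited by its share; link is full
        active -= satisfied  # redistribute leftovers among the rest
    return alloc


if __name__ == "__main__":
    shares = {"fcoe": 50, "lan": 50}
    # LAN is quiet, so storage can borrow the unused bandwidth.
    print(ets_allocate(10, shares, {"fcoe": 8, "lan": 2}))
    # Both classes are busy, so each is held to its guaranteed share.
    print(ets_allocate(10, shares, {"fcoe": 8, "lan": 9}))
```

The point of the model is that the storage class never falls below its guaranteed share under congestion, yet idle bandwidth is not wasted when one class is quiet.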

How FCoE Enables Converged Networking

Traditional data centers often required separate connections for LAN and SAN traffic:

Ethernet Network + Fibre Channel SAN

FCoE allows both to operate on the same Ethernet infrastructure:

LAN + SAN over Ethernet

This reduces:

Cabling

Adapter count

Switch complexity

Power consumption

Ethernet Optical Modules Used for FCoE

Because FCoE runs on Ethernet physical layers, it uses standard Ethernet optical transceivers rather than native Fibre Channel optics.

Common FCoE optical modules include:

| Ethernet Speed | Typical Modules |
|---|---|
| 10G | SFP+ SR/LR |
| 25G | SFP28 SR/LR |
| 40G | QSFP+ SR4/LR4 |
| 100G | QSFP28 SR4/LR4 |

These modules carry both Ethernet traffic and encapsulated Fibre Channel storage traffic across the same network infrastructure.

✅ FCoE vs. Fibre Channel vs. Ethernet vs. iSCSI

FCoE, Fibre Channel, Ethernet, and iSCSI are all used in enterprise networking and storage environments, but they serve different purposes and use different transport methods.

The simplest distinction is:

Fibre Channel (FC) is a dedicated storage networking protocol

FCoE carries Fibre Channel traffic over Ethernet

Ethernet is a general-purpose networking technology

iSCSI transports storage traffic over TCP/IP networks

Understanding these differences helps organizations choose the right infrastructure for performance, scalability, and cost.

FCoE vs. Fibre Channel

Traditional Fibre Channel uses a dedicated SAN infrastructure with:

FC switches

FC HBAs

FCoE simplifies deployment by transporting Fibre Channel frames across Ethernet networks instead of native FC fabrics.

| Feature | Fibre Channel | FCoE |
|---|---|---|
| Transport Network | Native FC Fabric | Ethernet |
| Infrastructure | Separate SAN | Converged LAN + SAN |
| Optical Modules | FC Optics | Ethernet Optics |
| Protocol | Native FC | FC Encapsulated in Ethernet |

FCoE preserves the Fibre Channel protocol while replacing the underlying physical and link layers with Ethernet.

FCoE vs. Ethernet

Ethernet is designed for general data communication, while FCoE is optimized for storage traffic.

Unlike standard Ethernet, FCoE requires lossless transport technologies such as:

Data Center Bridging (DCB)

Priority Flow Control (PFC)

| Feature | Ethernet | FCoE |
|---|---|---|
| Purpose | General Networking | Storage Networking |
| Packet Loss | Allowed | Controlled |
| Transport Type | Ethernet Frames | FC over Ethernet |
| Requires DCB | No | Yes |

In simple terms:

FCoE uses Ethernet infrastructure for storage networking.

FCoE vs. iSCSI

Both FCoE and iSCSI transport storage traffic over Ethernet, but they use different protocols.

iSCSI uses TCP/IP

FCoE preserves native Fibre Channel communication

| Feature | FCoE | iSCSI |
|---|---|---|
| Protocol | Fibre Channel | TCP/IP |
| Transport | FC over Ethernet | SCSI over TCP/IP |
| Network Type | Lossless Ethernet | Standard Ethernet |
| Latency | Lower | Higher |
| Deployment Complexity | Higher | Lower |

iSCSI is typically easier and less expensive to deploy, while FCoE is commonly used in enterprise SAN environments that require low latency and integration with existing Fibre Channel infrastructure.

Optical Module Differences

Traditional Fibre Channel networks use dedicated FC optical modules such as:

8G FC SFP+

16G FC SFP+

32G FC SFP28

FCoE networks use standard Ethernet optical transceivers, including:

10G SFP+ SR/LR

25G SFP28

40G QSFP+

100G QSFP28

Because FCoE operates on Ethernet physical layers, Ethernet optical modules are required instead of native Fibre Channel optics.

✅ Common Optical Modules Used in FCoE Networks

Because FCoE operates on Ethernet physical layers, it uses standard Ethernet optical transceivers rather than native Fibre Channel optics. The choice of optical module depends on factors such as network speed, transmission distance, switch compatibility, and data center architecture.

In most deployments, FCoE networks prioritize:

Low latency

Stable link performance

High interoperability

Enterprise switch compatibility

Reliable lossless Ethernet operation

The following optical modules are commonly used in modern FCoE environments.

10G SFP+ Modules for Classic FCoE Deployments

10G Ethernet was the most widely adopted platform during the early growth of FCoE, especially in enterprise blade server and converged infrastructure deployments.

Common 10G FCoE optical modules include:

| Module Type | Fiber Type | Typical Reach |
|---|---|---|
| 10GBASE-SR SFP+ | Multimode Fiber (OM3/OM4) | Up to 300 m (OM3) or 400 m (OM4) |
| 10GBASE-LR SFP+ | Single-Mode Fiber (OS2) | Up to 10 km |

10G SFP+ modules are commonly used with:

Cisco UCS

VMware environments

Enterprise SAN convergence

Top-of-rack (ToR) switching

For short in-rack connections, many deployments also use:

10G DAC cables

10G AOC cables

to reduce cost and power consumption.

25G SFP28 Modules for Newer Converged Networks

As data center bandwidth requirements increased, many organizations migrated from 10G to 25G Ethernet.

25G SFP28 modules provide:

Higher bandwidth

Better lane efficiency

Lower cost per gigabit

Improved scalability

Common options include:

| Module Type | Fiber Type | Typical Reach |
|---|---|---|
| 25GBASE-SR SFP28 | Multimode Fiber | Up to 100 m (OM4) |
| 25GBASE-LR SFP28 | Single-Mode Fiber | Up to 10 km |

25G FCoE deployments are common in:

Modern enterprise data centers

Virtualized storage networks

High-density server environments

40G QSFP+ Modules for Higher-Density Links

40G QSFP+ modules are often used for aggregation and switch uplink connections in converged FCoE infrastructures.

Typical modules include:

| Module Type | Fiber Type | Typical Reach |
|---|---|---|
| 40GBASE-SR4 QSFP+ | Multimode Fiber | Up to 150 m (OM4) |
| 40GBASE-LR4 QSFP+ | Single-Mode Fiber | Up to 10 km |

These modules are commonly deployed in:

Spine-leaf architectures

Aggregation layers

High-density switch interconnects

40G links can consolidate multiple lower-speed server connections into fewer high-bandwidth uplinks.
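When consolidating server links onto uplinks, a quick oversubscription check helps confirm that storage traffic will not be starved. The sketch below is simple arithmetic with hypothetical port counts; what ratio is acceptable depends on the workload and is not something this article specifies.

```python
def oversubscription_ratio(server_links, server_gbps, uplinks, uplink_gbps):
    """Ratio of total server-facing bandwidth to total uplink bandwidth."""
    if uplinks <= 0 or uplink_gbps <= 0:
        raise ValueError("at least one uplink with positive speed is required")
    return (server_links * server_gbps) / (uplinks * uplink_gbps)


if __name__ == "__main__":
    # Hypothetical top-of-rack switch: 32 x 10G server ports, 4 x 40G uplinks.
    ratio = oversubscription_ratio(32, 10, 4, 40)
    print(f"oversubscription {ratio:.1f}:1")
```

A ratio of 1.0 means the uplinks can carry every server port at full rate; higher values mean the uplinks rely on servers not all bursting at once.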

100G QSFP28 Modules for Modern Data Center Backbones

100G Ethernet is increasingly used in large-scale converged infrastructures and modern data center backbones.

Common 100G FCoE optical modules include:

| Module Type | Fiber Type | Typical Reach |
|---|---|---|
| 100GBASE-SR4 QSFP28 | Multimode Fiber | Up to 100 m (OM4) |
| 100GBASE-LR4 QSFP28 | Single-Mode Fiber | Up to 10 km |

100G QSFP28 modules are ideal for:

Core data center switching

High-performance storage fabrics

Cloud-scale infrastructure

Large virtualization clusters

These higher-speed links help support increasing storage traffic volumes while reducing the number of ports and cables required.

DAC and AOC Options for Short-Reach Links

In many FCoE deployments, especially inside racks or between adjacent racks, DAC and AOC solutions are often more cost-effective than traditional optical transceivers.

DAC (Direct Attach Copper)

DAC cables are passive or active copper cables with integrated connectors.

Advantages include:

Lower cost

Very low latency

Reduced power consumption

Typical use cases:

Server-to-switch connections

Short top-of-rack links

AOC (Active Optical Cable)

AOCs combine optical fiber with integrated transceiver technology.

Advantages include:

Longer reach than DAC

Lower cable weight

Better EMI resistance

Typical use cases:

Cross-rack connections

Medium-distance high-speed links

Choosing the Right Optical Module for FCoE

When selecting optical modules for FCoE networks, important considerations include:

| Selection Factor | Importance |
|---|---|
| Switch Compatibility | Ensures interoperability |
| Transmission Distance | Determines module type |
| Network Speed | Matches bandwidth requirements |
| Fiber Type | Must match the module (multimode or single-mode) |
| Latency and Stability | Critical for storage traffic |
| DCB Environment Support | Required for reliable FCoE operation |

Because FCoE environments are highly sensitive to network reliability, enterprise-grade Ethernet optical transceivers with stable performance and broad switch compatibility are typically preferred.
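The speed, distance, and fiber-type factors can be turned into a simple lookup. The sketch below uses the typical reach figures quoted in this guide as a small hypothetical catalog; actual reach depends on fiber grade and vendor datasheets, and switch compatibility still has to be checked separately.

```python
from typing import NamedTuple, Optional


class Module(NamedTuple):
    name: str
    speed_gbps: int
    fiber: str      # "multimode" or "single-mode"
    reach_m: int    # typical maximum reach in meters


# Illustrative catalog based on the typical reach values listed in this guide.
CATALOG = [
    Module("10GBASE-SR SFP+", 10, "multimode", 300),
    Module("10GBASE-LR SFP+", 10, "single-mode", 10_000),
    Module("25GBASE-SR SFP28", 25, "multimode", 100),
    Module("25GBASE-LR SFP28", 25, "single-mode", 10_000),
    Module("40GBASE-SR4 QSFP+", 40, "multimode", 150),
    Module("40GBASE-LR4 QSFP+", 40, "single-mode", 10_000),
    Module("100GBASE-SR4 QSFP28", 100, "multimode", 100),
    Module("100GBASE-LR4 QSFP28", 100, "single-mode", 10_000),
]


def candidate_modules(speed_gbps: int, distance_m: float,
                      fiber: Optional[str] = None) -> list:
    """Return module names that match the speed, cover the distance,
    and (if given) use the installed fiber type."""
    return [
        m.name for m in CATALOG
        if m.speed_gbps == speed_gbps
        and m.reach_m >= distance_m
        and (fiber is None or m.fiber == fiber)
    ]


if __name__ == "__main__":
    print(candidate_modules(25, 80))
    print(candidate_modules(40, 2000))
    print(candidate_modules(100, 50, fiber="multimode"))
```

For very short in-rack runs, DAC or AOC cables may be a cheaper alternative to any of the modules this lookup returns.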

✅ Key Requirements for FCoE Performance and Stability

FCoE environments are more sensitive to network quality than standard Ethernet networks because storage traffic requires predictable latency, reliable delivery, and stable long-term operation. Although FCoE uses Ethernet infrastructure, enterprise deployments typically demand higher standards for switch configuration, optical module quality, and overall network interoperability.

To maintain stable FCoE performance, organizations must focus on several critical deployment factors.

DCB and Lossless Ethernet

One of the most important requirements for FCoE is lossless Ethernet.

Traditional Ethernet networks allow packet drops during congestion, but Fibre Channel storage traffic is designed for highly reliable transport.

To support this behavior, FCoE relies on Data Center Bridging (DCB).

DCB is a collection of Ethernet enhancements that help create a more predictable and lossless environment.

Key DCB technologies include:

| Technology | Function |
|---|---|
| Priority Flow Control (PFC) | Prevents frame loss during congestion |
| Enhanced Transmission Selection (ETS) | Allocates bandwidth between traffic classes |
| DCBX | Exchanges DCB configuration information |

Without properly configured DCB, FCoE storage traffic may experience instability, congestion issues, or packet loss.
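The difference PFC makes can be illustrated with a toy queue simulation. This is a deliberately simplified model, not how any particular switch implements PFC: time advances in ticks, a pause takes effect on the next tick, and all names, rates, and thresholds are hypothetical. The buffer is sized so that one tick of in-flight frames still fits above the pause threshold.

```python
def simulate_link(offered, line_rate, drain_rate, buffer_frames,
                  pfc=False, xoff=0, xon=0):
    """Return (delivered, dropped, pending) frame counts.

    offered        frames the sender generates in each tick
    line_rate      most frames the sender can transmit per tick
    drain_rate     most frames the switch can forward per tick
    buffer_frames  switch buffer reserved for this priority
    xoff / xon     queue depths at which pause is asserted / released
    """
    backlog = depth = delivered = dropped = 0
    paused = False
    for new_frames in offered:
        backlog += new_frames
        # A paused sender holds its frames instead of transmitting.
        sent = 0 if paused else min(backlog, line_rate)
        backlog -= sent
        # Frames that do not fit in the buffer are lost.
        accepted = min(sent, buffer_frames - depth)
        dropped += sent - accepted
        depth += accepted
        forwarded = min(depth, drain_rate)
        depth -= forwarded
        delivered += forwarded
        if pfc:
            if depth >= xoff:
                paused = True    # tell the sender to stop this priority
            elif depth <= xon:
                paused = False   # queue has drained; resume
    return delivered, dropped, backlog + depth


if __name__ == "__main__":
    # Sender bursts at twice the drain rate for 10 ticks, then goes idle.
    traffic = [8] * 10 + [0] * 20
    print("no PFC:", simulate_link(traffic, 8, 4, 20))
    print("PFC   :", simulate_link(traffic, 8, 4, 20, pfc=True, xoff=12, xon=4))
```

Without PFC the overloaded queue discards frames; with PFC the sender is held back instead, so the same traffic arrives intact at the cost of added delay. That trade is exactly what storage traffic needs.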

Low Latency and Low Error Rate

Storage traffic is highly sensitive to latency variation and transmission errors.

For stable FCoE operation, networks should maintain:

Low latency

Low jitter

Low bit error rate (BER)

Stable optical signal quality

Poor-quality links can lead to:

Frame retransmissions

Performance degradation

Storage access interruptions

Link instability

Because of this, enterprise FCoE deployments typically prioritize high-quality Ethernet optical modules and reliable cabling infrastructure.
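To get a feel for what a given bit error rate means in practice, the arithmetic below estimates how often bit errors would occur on a link. It assumes errors are independent and uses the nominal data rate rather than the encoded line rate, so the results are rough guides only; the function names are hypothetical.

```python
def expected_bit_errors(ber, rate_gbps, seconds):
    """Expected number of bit errors over a time window on a saturated link."""
    return ber * rate_gbps * 1e9 * seconds


def mean_seconds_between_errors(ber, rate_gbps):
    """Average time between bit errors on a saturated link."""
    return 1.0 / (ber * rate_gbps * 1e9)


if __name__ == "__main__":
    for ber in (1e-12, 1e-9):
        gap = mean_seconds_between_errors(ber, 10)
        per_hour = expected_bit_errors(ber, 10, 3600)
        print(f"BER {ber:g}: one error every {gap:g} s, "
              f"about {per_hour:g} per hour at 10 Gb/s")
```

At 10 Gb/s, a BER of 1e-12 corresponds to roughly one bit error every 100 seconds on a fully loaded link, while a degraded link at 1e-9 would see about ten errors per second. Each corrupted frame is discarded, which is why marginal optics show up quickly as storage retries.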

Switch Compatibility

FCoE requires Ethernet switches that support:

DCB

PFC

FCoE forwarding features

Common enterprise platforms include:

Cisco Nexus

Dell EMC

Brocade

HPE

Compatibility is especially important because some switches enforce strict transceiver validation policies through EEPROM checks and vendor coding requirements.

In many production environments, using unsupported optical modules may result in:

Warning messages

Link failures

Reduced stability

Disabled monitoring functions
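As a rough illustration of what such an EEPROM check looks at, the sketch below reads the vendor fields from an SFP/SFP+ identification page using the byte offsets defined in SFF-8472 and compares the vendor name against an approved list. The helper names, the demo vendor strings, and the allow-list logic are hypothetical; real switches apply their own, often proprietary, validation, and QSFP modules use a different memory map (SFF-8636).

```python
def parse_sfp_id(eeprom: bytes) -> dict:
    """Extract basic identity fields from an SFP/SFP+ A0h EEPROM page."""
    if len(eeprom) < 96:
        raise ValueError("need at least the first 96 bytes of the A0h page")

    def text(start, end):
        # Fields are ASCII, padded with spaces on the right.
        return eeprom[start:end].decode("ascii", errors="replace").strip()

    return {
        "identifier": eeprom[0],          # 0x03 indicates SFP/SFP+
        "vendor_name": text(20, 36),
        "vendor_pn": text(40, 56),
        "vendor_sn": text(68, 84),
        # CC_BASE (byte 63) is the low byte of the sum of bytes 0-62.
        "cc_base_ok": (sum(eeprom[0:63]) & 0xFF) == eeprom[63],
    }


def is_accepted(info: dict, approved_vendors: set) -> bool:
    """Hypothetical policy: valid checksum and vendor on the allow-list."""
    return info["cc_base_ok"] and info["vendor_name"] in approved_vendors


def build_demo_eeprom(vendor: str, part: str, serial: str) -> bytes:
    """Create a synthetic EEPROM image for testing the parser."""
    page = bytearray(96)
    page[0] = 0x03
    page[20:36] = vendor.encode("ascii").ljust(16)
    page[40:56] = part.encode("ascii").ljust(16)
    page[68:84] = serial.encode("ascii").ljust(16)
    page[63] = sum(page[0:63]) & 0xFF
    return bytes(page)


if __name__ == "__main__":
    image = build_demo_eeprom("EXAMPLE VENDOR", "SFP-10G-SR-X", "SN0001")
    info = parse_sfp_id(image)
    print(info, is_accepted(info, {"EXAMPLE VENDOR"}))
```

On Linux hosts the raw bytes can usually be dumped with the NIC's module EEPROM tooling; on switches the same fields typically appear in the transceiver detail output.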

Module Interoperability and Vendor Certification

Although FCoE uses standard Ethernet optical transceivers, interoperability remains critical in enterprise storage networks.

When selecting optical modules for FCoE deployments, organizations typically evaluate:

| Requirement | Importance |
|---|---|
| Vendor compatibility | Ensures switch recognition |
| Stable DOM/DDM monitoring | Supports troubleshooting |
| Low BER performance | Improves storage reliability |
| Thermal stability | Supports long-term operation |
| Enterprise qualification | Reduces deployment risk |

For this reason, many data centers prefer modules that have been qualified and compatibility-tested for their switch platforms instead of uncertified generic transceivers.

In FCoE environments, stable interoperability is often more important than simply achieving link connectivity.

✅ FAQ About FCoE (Fibre Channel over Ethernet)

1. Is FCoE Still Used Today?

Yes. FCoE is still used in many enterprise data centers, especially in environments that already rely on Fibre Channel SAN infrastructure and converged networking architectures.

Although newer technologies such as NVMe/TCP and RoCE are becoming more common in cloud and hyperscale environments, FCoE remains relevant for:

Enterprise storage networks

Cisco UCS deployments

Virtualized data centers

Converged LAN and SAN infrastructures

2. Does FCoE Require Special Optical Modules?

No. FCoE typically uses standard Ethernet optical modules rather than dedicated Fibre Channel optics.

Common FCoE optical transceivers include:

10G SFP+ SR/LR

25G SFP28 SR/LR

40G QSFP+

100G QSFP28

However, enterprise FCoE environments often require:

Better switch compatibility

Lower error rates

Stable DCB operation

Reliable interoperability

Therefore, enterprise-grade Ethernet optical modules are generally preferred.

3. Is FCoE the Same as Fibre Channel?

No. FCoE and Fibre Channel are closely related, but they are not the same technology.

Traditional Fibre Channel uses a dedicated FC SAN infrastructure, while FCoE transports Fibre Channel traffic across Ethernet networks.

The key difference is:

| Technology | Transport Network |
|---|---|
| Fibre Channel | Native FC Fabric |
| FCoE | Ethernet |

FCoE preserves the native Fibre Channel protocol while changing the physical transport layer to Ethernet.

4. Can Standard Ethernet Switches Support FCoE?

Not always.

FCoE requires Ethernet switches that support:

Data Center Bridging (DCB)

Priority Flow Control (PFC)

FCoE forwarding capabilities

Standard unmanaged Ethernet switches typically do not support these features.

Enterprise switches commonly used for FCoE include:

Cisco Nexus

Dell EMC switches

Brocade data center switches

These platforms are designed to support the lossless Ethernet environment required for stable FCoE storage traffic.

✅ When to Use FCoE Protocol

FCoE was developed to simplify enterprise storage networking by combining Fibre Channel SAN traffic and Ethernet LAN traffic into a single converged infrastructure. While newer Ethernet-based storage technologies continue to evolve, FCoE still remains valuable in specific enterprise environments where low latency, storage reliability, and SAN integration are important.

The best deployment choice depends on factors such as existing infrastructure, scalability requirements, operational complexity, and long-term data center strategy.

Best-Fit Scenarios for FCoE

FCoE is most suitable for organizations that already operate Fibre Channel storage environments but want to reduce infrastructure complexity and cabling overhead.

Typical FCoE deployment scenarios include:

Enterprise data centers with existing FC SAN infrastructure

Cisco UCS converged networking environments

Virtualized server clusters

Blade server architectures

High-density rack deployments

Organizations migrating from traditional FC to Ethernet-based infrastructures

FCoE is especially useful when companies want to preserve Fibre Channel storage performance while simplifying physical network deployment.

Its main advantages include:

Reduced cabling

Fewer adapters and switch ports

Simplified infrastructure management

Lower power and cooling requirements

Shared Ethernet optical connectivity

Because FCoE operates on Ethernet physical layers, organizations can also leverage standard Ethernet optical transceivers such as 10G SFP+, 25G SFP28, 40G QSFP+, and 100G QSFP28 modules for converged network deployments.

When Fibre Channel Still Makes Sense

Traditional Fibre Channel remains a strong choice for highly specialized enterprise SAN environments that prioritize maximum stability, deterministic performance, and long-established operational practices.

Native FC SANs are still commonly used in:

Large enterprise storage networks

Mission-critical database systems

Financial institutions

Legacy SAN environments

High-performance storage fabrics

Advantages of traditional Fibre Channel include:

Mature SAN ecosystem

Dedicated storage isolation

Extremely predictable performance

Proven long-term reliability

However, FC infrastructure typically requires:

Separate FC switches

Dedicated FC optical modules

Independent SAN cabling

Higher deployment complexity

For organizations heavily invested in existing Fibre Channel architectures, continuing with native FC may still be the most practical option.

When iSCSI or NVMe/TCP May Be a Better Choice

In many modern cloud and hyperscale environments, organizations increasingly choose IP-based storage protocols such as:

iSCSI

NVMe/TCP

instead of FCoE.

These protocols are often preferred because they:

Operate on standard Ethernet networks

Require less specialized configuration

Simplify large-scale deployment

Integrate easily with cloud infrastructure

Reduce operational complexity

iSCSI is commonly selected for:

Small and medium business storage

Cost-sensitive deployments

General-purpose virtualization

NVMe/TCP is becoming increasingly important for:

High-speed flash storage

AI infrastructure

Modern software-defined data centers

Scalable cloud architectures

Compared with FCoE, these technologies generally offer simpler deployment models and broader ecosystem adoption in newer environments.

Final Thoughts

FCoE remains an important technology in the evolution of converged data center networking. It bridges the gap between traditional Fibre Channel SAN infrastructure and modern Ethernet-based architectures by allowing storage traffic and network traffic to share the same physical Ethernet environment.

Although newer storage networking technologies continue to grow, FCoE still provides real value in enterprise environments that require:

Fibre Channel compatibility

Converged networking

Low-latency storage communication

Simplified infrastructure deployment

For stable FCoE operation, selecting reliable Ethernet optical transceivers and compatible networking hardware is critical.
